Artificial Intelligence is a matter of chips: processors vs memory cards

30 JUN, 2023

By Anjali Bastianpillai, Senior Product Specialist, Pictet Asset Management

Artificial Intelligence (AI) is resource-intensive: generating new content requires large amounts of data and processing power. Processors are the most obvious beneficiaries of AI, but large language models (LLMs) also require adequate memory capacity and bandwidth.

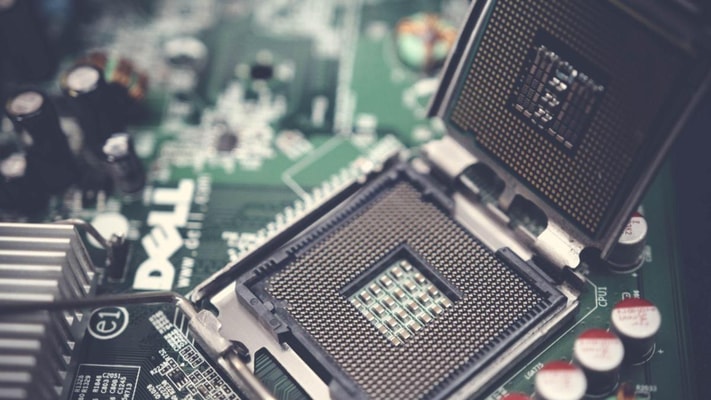

Chips used in memory, computing, and storage systems account for the largest share of industry sales. Memory chips (DRAM) and storage chips (NAND flash) hold data and instructions, while processor chips (such as a computer's CPU or a GPU) perform calculations and process data in real time.

The memory chip market, which accounts for 26% of total semiconductor sales revenue, has grown at a 10% compound annual growth rate (CAGR) over the past 10 years, driven by increased smartphone usage, advancing digitalization, and the broader use of semiconductors in sectors such as automotive and IT. The development of LLMs has raised the bar further, requiring significantly more memory and storage to match the size of the datasets involved.
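To put the growth figure in perspective, the short sketch below works through the arithmetic of a 10% CAGR sustained over 10 years. The function names are illustrative, and the starting value of 100 is an arbitrary index rather than an actual market size.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return how many times larger a value becomes after compounding."""
    return (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant annual growth rate linking two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures from the text: 10% CAGR over 10 years.
multiple = growth_multiple(0.10, 10)
print(f"Growth multiple after 10 years: {multiple:.2f}x")  # 2.59x

# Sanity check on an arbitrary index of 100 (not a real market value).
start_index = 100.0
end_index = start_index * multiple
print(f"Implied CAGR: {implied_cagr(start_index, end_index, 10):.1%}")  # 10.0%
```

In other words, a market compounding at 10% a year is roughly 2.6 times its original size after a decade.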

Memory capacity and speed are becoming the main bottleneck in the development and training of new AI models.
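A rough back-of-the-envelope sketch shows why both capacity and bandwidth matter. The model size (70 billion parameters), the precision (2 bytes per parameter), and the bandwidth (1,000 GB/s) are hypothetical values chosen for illustration, not figures from any specific product, and the calculation covers only the model weights.

```python
def weights_memory_gb(num_parameters: float, bytes_per_parameter: int) -> float:
    """Memory needed just to hold the model weights, in gigabytes (1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


def seconds_per_full_read(memory_gb: float, bandwidth_gb_per_s: float) -> float:
    """Time to stream all weights from memory once at a given bandwidth."""
    return memory_gb / bandwidth_gb_per_s


# Hypothetical model: 70 billion parameters stored in 16-bit precision.
size_gb = weights_memory_gb(70e9, 2)
print(f"Weights alone: {size_gb:.0f} GB")  # 140 GB

# Hypothetical memory bandwidth of 1,000 GB/s.
read_time = seconds_per_full_read(size_gb, 1000.0)
print(f"One full pass over the weights: {read_time:.2f} s")  # 0.14 s
```

Under these assumptions, capacity determines whether the model fits in memory at all, while bandwidth caps how quickly the processor can be fed with it.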

New memory technologies are helping to ease this bottleneck by accelerating data transfer, improving performance, and increasing energy efficiency. Micron Technology is among the leading companies in this field. Its semiconductors are used in various sectors, from consumer electronics to data storage servers, networking systems, integrated electronic systems, and automotive applications.

At Pictet Asset Management, we seek out companies capable of leading the industry towards memory chips with greater capacity and speed, and which stand to benefit from rising demand for server memory as AI is deployed.

The logic processor market (accounting for 42% of total semiconductor sales revenue) is also growing steadily, thanks to technological advances that deliver higher productivity, lower energy consumption, and greater quality and reliability. This, in turn, improves the overall price/performance ratio of processor chips, a trend commonly associated with Moore's Law (the observation that the number of transistors on a chip doubles roughly every two years), enabling greater use of electronic devices.

AI is a new application made possible by improvements in logic processors, but it is also a driving force behind demand for both the servers that process AI algorithms and the end-user devices that interact with the resulting models. These devices range from traditional desktops and smartphones to embedded systems such as robotic arms and vehicles capable of autonomous driving.

Nvidia, a US semiconductor leader, has benefited greatly from demand for the high-performance computing components needed to power AI algorithms. Its high-end data center accelerator chips, which now account for over half of Nvidia's revenue, are optimized for the high-speed parallel processing that AI training requires. In servers, these accelerators work alongside host CPUs, including those from AMD, which has gained significant market share from its long-time rival, Intel, by aligning its business in this direction in recent years.

In conclusion, semiconductors are the backbone of technological innovation. They enable the functioning of virtually everything, from smartphones and laptops to servers and supercomputers. The expectations for this industry are extremely high, with estimates of trillions of dollars in growth. Specifically, it is estimated that 70% of the growth will be related to the automotive industry (especially electric vehicles), data storage, and the wireless industry.